“We shouldn’t ask our customers to make a tradeoff between privacy and security. We need to offer them the best of both. Ultimately, protecting someone else’s data protects all of us.” Can you guess who said that? The answer is at the end of the article. In the meantime, we continue our discussion of iPhone and iOS security, following up on the Apple vs. Law Enforcement – iOS 4 through 13.5 article. This time, we will look at some other aspects of iOS security.

The Exploits

You have probably heard about the revamped Apple Security Bounty program. Participants can earn up to $100,000 for a new lock screen bypass, and up to $250,000 for user data extraction.

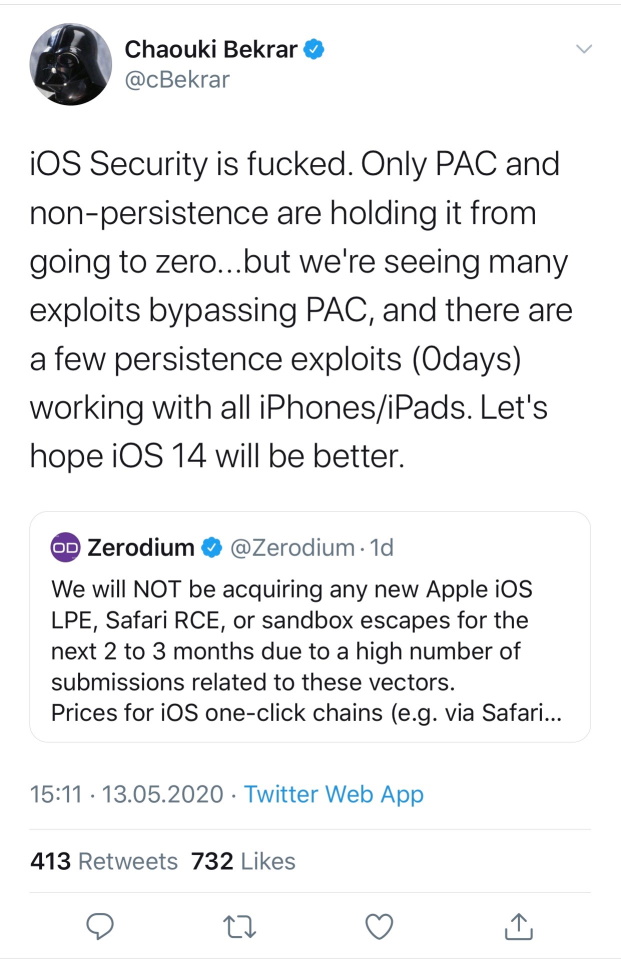

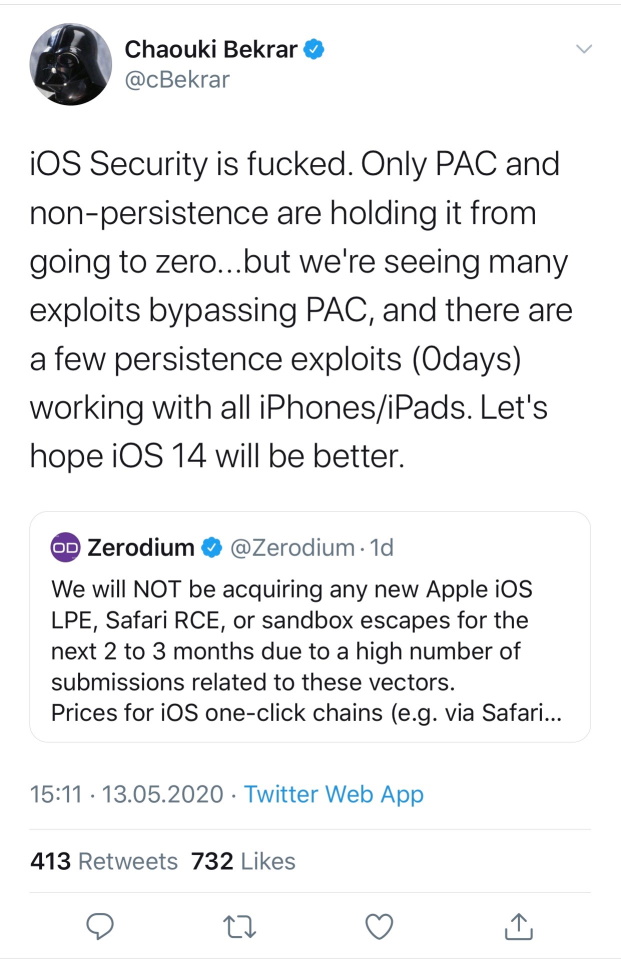

Zerodium pays more, up to $2 million. However, in a recent statement, they said the following:

We will NOT be acquiring any new Apple iOS LPE, Safari RCE, or sandbox escapes for the next 2 to 3 months due to a high number of submissions related to these vectors.

Prices for iOS one-click chains (e.g. via Safari) without persistence will likely drop in the near future.

At the same time, Apple sued Corellium, the startup that was able to emulate Apple devices running different versions of iOS, a capability that greatly helps security researchers all over the world. See Apple Fights U.S. Government Intervention In iPhone Copyright Case and iPhone Research Tool Sued by Apple Says It’s Just Like a PlayStation Emulator for the latest updates.

So, are there iOS exploits in the wild? For sure. Here is a write-up on one of the most recently discovered vulnerabilities (it affects iOS 7 through 13.4.1 and was patched in the iOS 13.5 beta):

https://siguza.github.io/psychicpaper/

There are many more of those circulating without much (or any) publicity.

Cellebrite & Grayshift

Cellebrite and Grayshift are the two vendors supplying law enforcement with iPhone unlock tools. Although both companies keep their lips sealed on anything remotely resembling technical details or even compatibility specifications, it appears that they have won the latest round. While Apple keeps tightening USB restrictions, Cellebrite and Grayshift appear to work around the USB data block, at least in certain cases. The A12 SoC (iPhone Xr/Xs generation) no longer has the vulnerability that allowed these companies to attack passcodes on older-generation devices, yet support for the new-generation iPhones is touted in forensic newsgroups. It’s hard to imagine that Apple is unable to protect its devices against such attacks once and for all. Or maybe they can, but just do not want to? And is there any reason to build in the backdoor the government wants if exploits already exist that allow these two companies to break iPhone passcodes and extract the data?

Do not forget about NSO Group, either. Although their software is not publicly available and the exploits it relies on are kept secret, it can still be abused.

Poker face?

We never know what Apple is thinking, but it looks like they are keeping a poker face. Apple is certainly technically able to develop its very own unlock tool, or at least a tool that would decrypt the file system once the company is given an iPhone and its passcode (or one that performs a partial file system acquisition of locked devices). Yet Apple actively resists, turning down all requests to build unlock tools or introduce backdoors in the iPhone. In the company’s own words:

On this and many thousands of other cases, we continue to work around-the-clock with the FBI and other investigators who keep Americans safe and bring criminals to justice. As a proud American company, we consider supporting law enforcement’s important work our responsibility. The false claims made about our company are an excuse to weaken encryption and other security measures that protect millions of users and our national security.

It is because we take our responsibility to national security so seriously that we do not believe in the creation of a backdoor — one which will make every device vulnerable to bad actors who threaten our national security and the data security of our customers. There is no such thing as a backdoor just for the good guys, and the American people do not have to choose between weakening encryption and effective investigations.

Back to the quote in the first paragraph.

That was Tim Cook. Who else?